Containers are now an integral component of the DevOps architecture.

Developers often see them as a companion or alternative to virtualization. As containerization matures and gains traction thanks to its measurable benefits, it has become a central topic for DevOps teams.

What is containerization?

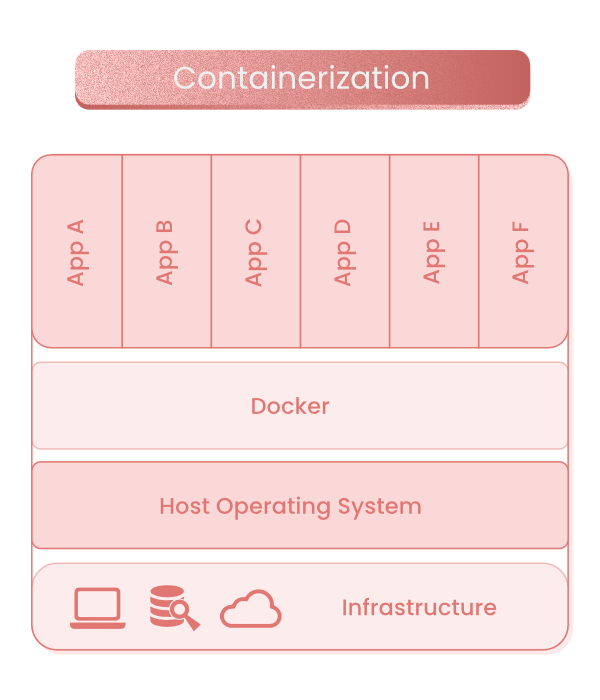

A container is an executable unit of software that packages application code together with its dependencies (config files, libraries, frameworks, and so on) so the app can run on any IT infrastructure. Transforming an application into this isolated, abstracted form is known as containerization.

Simply put, containerization allows an application to be written once and run anywhere a compatible container runtime is available.

How are containers different from virtual machines?

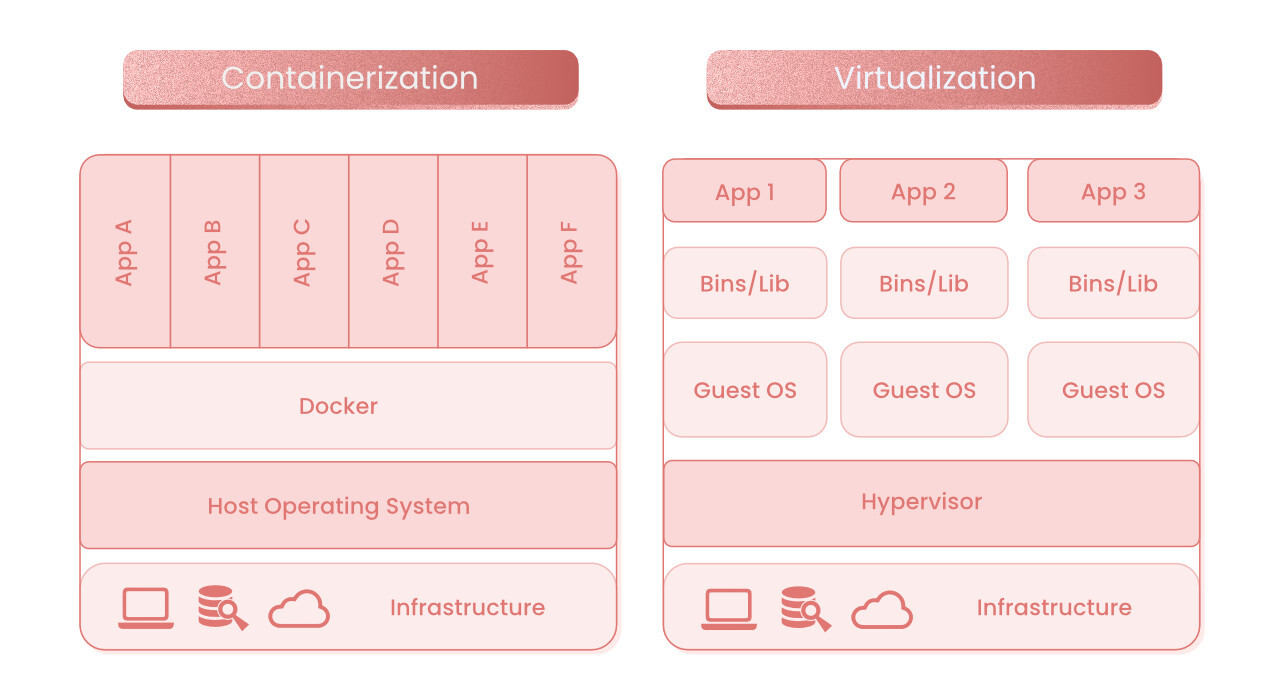

Containers and virtual machines (VMs) can look similar, especially since both are based on virtualization technologies. However, they’re not quite the same.

The primary difference is that containers virtualize the operating system (OS), not the underlying hardware, while VMs virtualize physical hardware through a hypervisor.

Further, every VM runs a full copy of a guest OS, as well as the application and its dependencies. A container, by contrast, packages only the application, its libraries, and its dependencies.

The absence of a guest OS makes containers more compact than VMs. They’re faster, lighter, and more portable. Containers also support a microservice architecture where each application component is built, deployed, and scaled with greater control and resource efficiency.

For many workloads, containers are a better fit than VMs, so it’s not surprising that many IT professionals prefer them.

Benefits of containerization

Containerization’s benefits are pretty evident as containers provide better functionality and application support. They help developers build highly flexible and scalable products while eliminating inefficiencies. Below are the major benefits of containerization.

1. Increased portability

Containerization produces executable software packages that are abstracted from the host operating system. As a result, the application isn’t tied to or dependent on a particular host OS. The resulting application is far more portable, as it can run consistently and reliably across platforms (Linux, Windows, or the cloud).

2. Boosted agility

As a platform-agnostic solution, containers are decoupled from host-level dependencies because they carry their own. Development teams can easily set up and use containers regardless of the OS or platform.

The development tools are universal and easy to use, which further drives the rapid development, packaging, and deployment of containers on all operating systems. This allows DevOps teams to leverage containers and accelerate agile workflows.

3. Greater speed

Since containers share the host machine’s OS kernel, they aren’t burdened by excess overhead. This lightweight structure leads to higher server efficiency and faster start times. The higher speed and operational efficiency also lower server and licensing costs.

4. Fault isolation

Each containerized application is isolated and operates independently. This localizes faults and failures and makes them easy to identify. While a DevOps team addresses a technical issue in one container, the remaining containers can keep operating without downtime.
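The isolation idea can be illustrated with ordinary operating-system processes standing in for containers. This is only an analogy under stated assumptions: the service names and the `run_isolated` helper are hypothetical, and real containers add filesystem, network, and resource isolation that plain processes don’t provide.

```python
import subprocess
import sys

# Hypothetical services; each value is a tiny Python program run in its own process.
SERVICES = {
    "orders": "print('orders ok')",
    "payments": "raise SystemExit(1)",  # simulated crash
    "search": "print('search ok')",
}


def run_isolated(services):
    """Run each service in a separate process and report its health.

    A failure in one process does not stop the others, which mirrors
    (loosely) how a fault stays inside the container where it occurred.
    """
    results = {}
    for name, code in services.items():
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
        )
        results[name] = "healthy" if proc.returncode == 0 else "failed"
    return results


if __name__ == "__main__":
    for name, status in run_isolated(SERVICES).items():
        print(f"{name}: {status}")
```

Here only `payments` is reported as failed, while `orders` and `search` finish normally, so the fault is easy to locate.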

5. Higher efficiency

Containers have a smaller footprint than VMs, load quickly, and leave more computing capacity available for the application itself. These characteristics make containers more efficient, especially in handling resources and reducing server and licensing costs.

6. Improved security

Because containerized applications run in isolation, the impact of a security breach is limited. Even if malicious code penetrates one application, container isolation helps protect the host system from widespread infection.

Additionally, development teams can define security permissions that control access and communication, and that block suspicious components as soon as they are flagged.

7. Resource maximization

Containerization enables configurable requests and limits for various resources, such as CPU, memory, and local storage. Most of these requests and limits are monitored internally.

Whenever a process overuses resources, it can be throttled or terminated. As a result, containerization allocates resources in proportion to the workload and within defined upper ceilings. This helps with granular optimization.
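The requests-and-limits idea can be sketched as a small check. This is a minimal illustration, not a real runtime API: `ResourceSpec` and `enforce` are hypothetical names, and the policy (throttle on CPU overuse, terminate on memory overuse) is an assumption modeled on how Kubernetes commonly treats the two resources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResourceSpec:
    """Hypothetical per-container settings (not a real library type)."""
    cpu_request_millicores: int   # amount reserved for scheduling
    cpu_limit_millicores: int     # upper ceiling for CPU
    memory_request_mib: int       # amount reserved for scheduling
    memory_limit_mib: int         # upper ceiling for memory


def enforce(spec: ResourceSpec, cpu_used_millicores: int, memory_used_mib: int) -> str:
    """Return the action an assumed runtime policy would take for this usage."""
    # Memory cannot be handed back gradually, so exceeding the limit is fatal here.
    if memory_used_mib > spec.memory_limit_mib:
        return "terminate"
    # CPU is compressible, so the assumed policy slows the container instead.
    if cpu_used_millicores > spec.cpu_limit_millicores:
        return "throttle"
    return "ok"


if __name__ == "__main__":
    spec = ResourceSpec(250, 500, 128, 256)
    print(enforce(spec, cpu_used_millicores=300, memory_used_mib=200))
    print(enforce(spec, cpu_used_millicores=600, memory_used_mib=200))
    print(enforce(spec, cpu_used_millicores=300, memory_used_mib=300))
```

Separating the request (what is reserved) from the limit (the ceiling) is what lets a scheduler pack workloads densely while still capping any single container.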

8. Ease of management

Container orchestration platforms like Kubernetes automate the installation, management, and scaling of containerized applications and services. This allows containers to operate autonomously depending on their workload. Automating tasks such as rolling out new versions, logging, debugging, and monitoring makes container management easier.

How are Docker and Kubernetes related to containers?

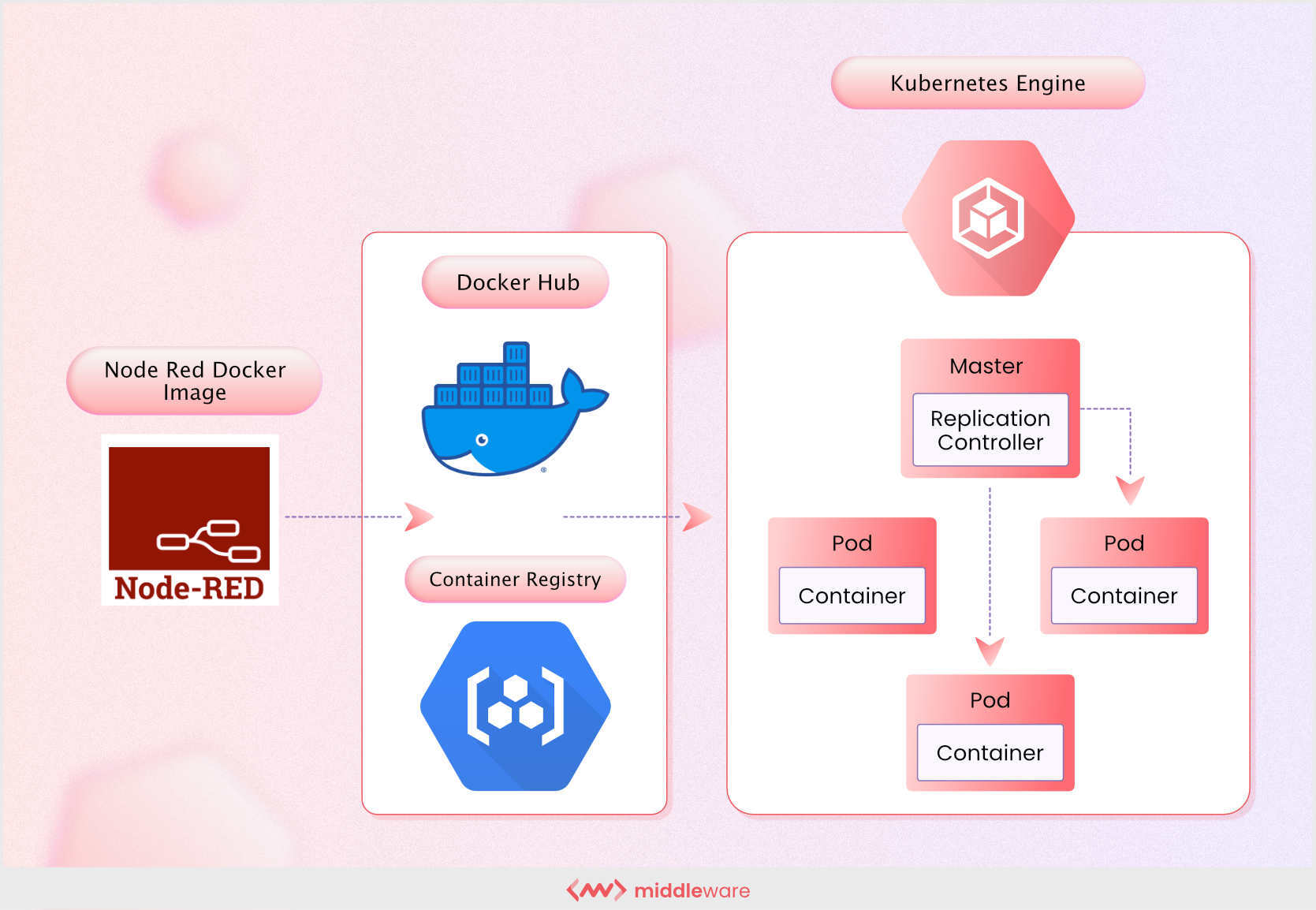

Docker and Kubernetes are popular container technologies that are often compared with each other. However, they are complementary rather than interchangeable: both help containerized applications run smoothly, but at different layers. Docker is an open-source containerization platform. It’s essentially a toolkit that makes containerization easy, safe, and quick. It’s one of the most popular container tools today.

Docker works well with small applications. However, it doesn’t offer the same functionality for large enterprise applications that need hands-on management. This is where Kubernetes enters the picture.

Kubernetes is an open-source container orchestration platform. It schedules and automates deploying, managing, and scaling containerized applications. Kubernetes also plays a significant role in service discovery, load balancing, self-healing, storage management, and automated rollouts and rollbacks.
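Self-healing in an orchestrator is commonly built on a reconciliation loop: compare the desired state with what is actually running, then act on the difference. The sketch below shows one pass of such a loop. The `reconcile` function and the service names are hypothetical, and this is not the Kubernetes API, only the general idea.

```python
def reconcile(desired: dict[str, int], running: dict[str, int]) -> list[tuple[str, str, int]]:
    """Compute the actions needed to move `running` toward `desired`.

    Both arguments map a service name to a replica count.
    Returns a list of (action, service, count) tuples.
    """
    actions = []
    for name in sorted(set(desired) | set(running)):
        want = desired.get(name, 0)
        have = running.get(name, 0)
        if have < want:
            # Replicas crashed or were never started: bring them back.
            actions.append(("start", name, want - have))
        elif have > want:
            # More replicas than requested, or a service no longer wanted.
            actions.append(("stop", name, have - want))
    return actions


if __name__ == "__main__":
    desired = {"web": 3, "api": 2}
    running = {"web": 1, "api": 2, "old": 1}
    for action in reconcile(desired, running):
        print(action)
```

Running this pass repeatedly is what makes the system converge: if two `web` replicas crash, the next pass simply asks for two more.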

Docker and Kubernetes are distinct, standalone technologies. At the same time, they complement each other well and can form a powerful combination.

Docker provides the containerization piece: it lets developers package applications into containers from the command line. These applications can then run in their respective IT environments without compatibility issues.

When demand increases, Kubernetes can automatically schedule and deploy additional Docker containers to maintain adequate availability.
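The scale-up decision can be sketched with the ratio-based formula described in the Kubernetes Horizontal Pod Autoscaler documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name, the metric values, and the replica bounds below are illustrative assumptions, and the real autoscaler also applies a tolerance and stabilization behavior that this sketch omits.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Return the replica count suggested by the ratio-based scaling formula."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds so scaling never runs away.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 3 replicas at 90% average CPU with a 60% target
    print(desired_replicas(3, 90, 60))  # prints 5
    # Load drops to 30%: scale back in
    print(desired_replicas(4, 30, 60))  # prints 2
```

The clamp to `min_replicas` and `max_replicas` reflects the usual practice of bounding automatic scaling so a traffic spike or a metric glitch cannot exhaust the cluster.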

Simply put, Kubernetes forms a symbiotic relationship with Docker to make the container infrastructure robust and scalable without compromising availability.

Where can you use containerization?

Here are some of the most common use cases of containerization:

- The “lift and shift” of existing applications to modern IT environments, such as the cloud.

- Leveraging a microservices architecture by setting up separate containers that operate together and can be decommissioned as necessary.

- Creating databases with containers as building blocks. Containerizing database shards converts monolithic databases into their modular counterparts.

- Refactoring existing applications for containers to take advantage of OS-level virtualization along with the modularity and independence of containers.

- Providing DevOps support according to Continuous Integration and Continuous Deployment (CI/CD) principles for streamlined building, testing, and deployment.

- Simplifying the deployment of repetitive jobs and functions, such as ETL tasks or batch jobs.

- Developing new container-native or cloud-native applications.

Containerize and more

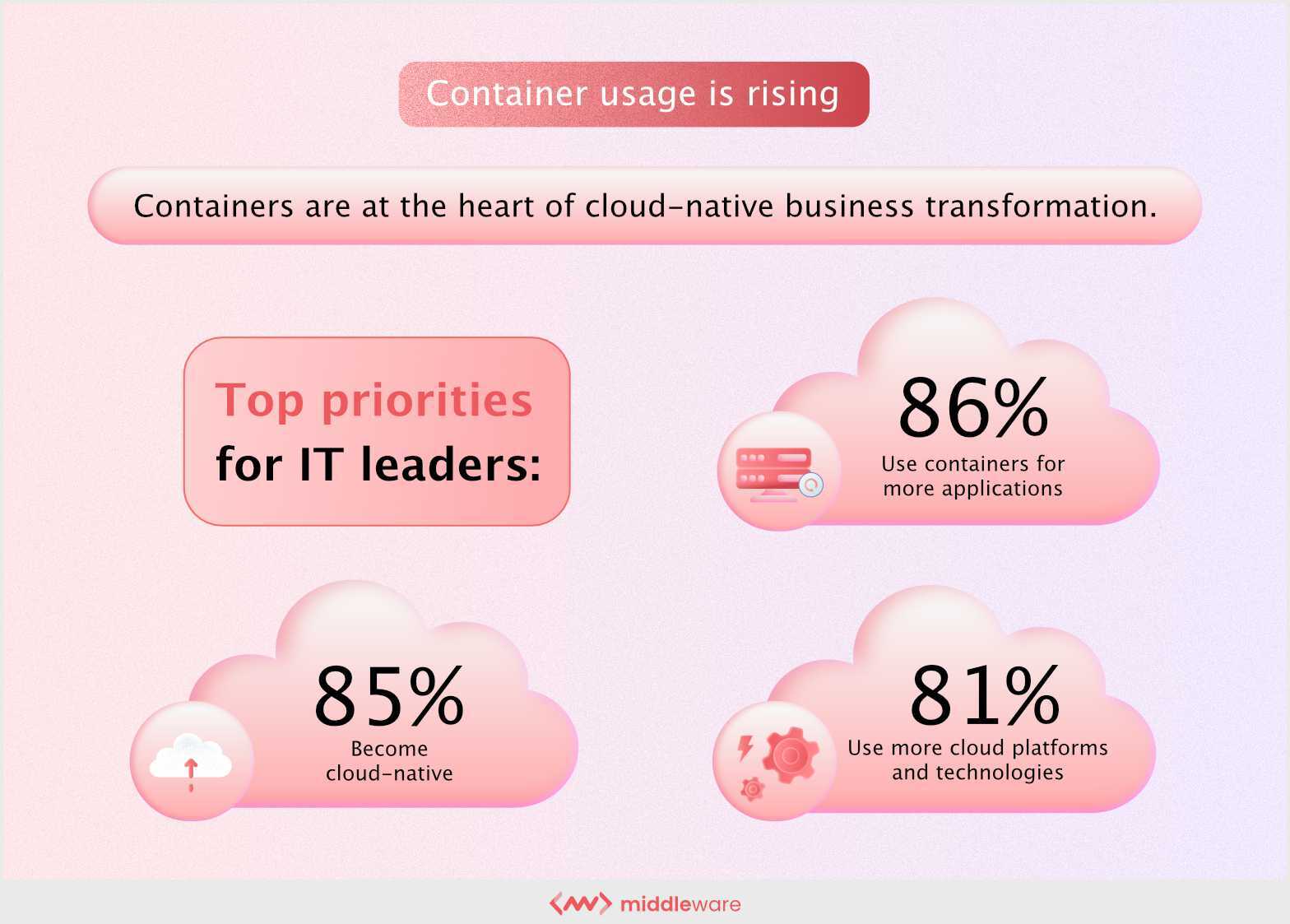

Containerization offers a wide range of benefits, including architectural modularity, application responsiveness, fault isolation and failure prevention, and platform independence. That’s one of the major reasons container usage is rising globally, with growth of over 30% year-over-year.

Using Docker and Kubernetes together can expand containerization capabilities and amplify the results.

You can also use an agile solution like Middleware to simplify migrating complex application architectures to containerized microservices with a no-code automated container.