DevOps streamlines software development and IT operations, enabling faster software delivery and high-quality service. However, a successful DevOps implementation requires the right DevOps tools to automate processes, enhance collaboration, and optimize workflows.

DevOps isn’t a single tool but a practice that integrates various DevOps automation tools, CI/CD tools, orchestration tools, and DevOps pipeline tools to improve efficiency.

This article highlights the 15+ essential DevOps tools for 2026, covering key areas such as DevOps monitoring tools, configuration management tools, DevOps security tools, observability tools, and testing tools.

Whether you’re looking for popular DevOps tools, AI tools, or version control tools, this list will help your team select the best solutions.

What are DevOps Tools?

DevOps tools are applications and platforms that help implement DevOps methodologies, workflows, and automation to improve collaboration between development and operations teams. These tools facilitate automated workflows, enhance continuous integration (CI) and continuous delivery (CD), and optimize the software development lifecycle (SDLC).

They cover all phases of SDLC, including planning, development, testing, deployment, monitoring, and security, ensuring better and more reliable software products. Organizations use DevOps tools to achieve faster time-to-market, improved product quality, and greater responsiveness to customer needs.

These tools enable DevOps automation, CI/CD, infrastructure as code (IaC), DevOps monitoring, configuration management, observability, security, and team collaboration, forming a complete DevOps strategy.

How to choose the right DevOps tools?

Choosing the right DevOps tools is vital for enhancing collaboration, workflow automation, and speeding up the software development lifecycle. Here are the things you must consider before making a decision:

Define Your Goals: Set clear DevOps goals, such as deployment frequency, shorter lead times, and improved system reliability. Clear goals point you to the DevOps automation tools that suit your organization.

Examine Project Demands: Look into the specific requirements your projects demand, from technology stack and application architecture to deployment environment. Check that the DevOps tools you select are available on your platform and can withstand the complexity of your applications.

Assess Integration Capability: DevOps pipeline tools must integrate seamlessly across CI/CD, version control, testing frameworks, configuration management, observability, and monitoring tools to ensure smooth workflows.

Assess Team Skill Levels: Choose tools with a manageable learning curve that match your team’s expertise. Providing training resources ensures a smooth transition when adopting new DevOps methodologies and tools.

Analyze Cost and Licensing: Set a budget for DevOps tools, then account for upfront and ongoing costs, including licensing fees, maintenance, and support. Calculate the total cost of ownership and weigh it against the value the tool delivers to the organization.

Choose Scalability and Flexibility: Select DevOps platform tools that can scale with your organization’s growth and adapt to evolving DevOps workflows. Tools that support customization and different deployment models provide long-term benefits.

Pay attention to Security and Compliance: Choose DevOps security tools with features like role-based access control (RBAC), audit logs, vulnerability scanning, encryption, and compliance adherence to maintain security and regulatory standards.

Check Community and Vendor Support: Consider how much support the community or vendor provides for the DevOps automation tools you are evaluating. An active community, extensive documentation, and responsive support channels help your team resolve problems faster.

Trial Before Ramping Up: Before committing a tool to your DevOps pipeline, run a small pilot to evaluate its performance against existing practices. A pilot surfaces issues with a DevOps pipeline tool early and lets you assess its return in a controlled environment.

By carefully weighing each of these factors, you can assemble a DevOps toolchain that automates work, fosters collaboration, fits your organization, and helps you deliver quality software quickly.
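One way to make the comparison above concrete is a simple weighted scoring sheet. The sketch below is only an illustration: the criteria names, the weights, the 1-to-5 scale, and the three-year cost horizon are all assumptions to replace with your own.

```python
from dataclasses import dataclass

# Hypothetical weights for the selection criteria (they should sum to 1.0).
WEIGHTS = {
    "integration": 0.30,
    "learning_curve": 0.20,
    "scalability": 0.20,
    "security": 0.20,
    "community": 0.10,
}


@dataclass
class Candidate:
    name: str
    scores: dict          # criterion -> score from 1 (poor) to 5 (excellent)
    upfront_cost: float   # one-time purchase or setup cost
    annual_cost: float    # licensing, maintenance, and support per year


def total_cost_of_ownership(candidate: Candidate, years: int = 3) -> float:
    """Upfront cost plus recurring costs over the evaluation horizon."""
    return candidate.upfront_cost + candidate.annual_cost * years


def weighted_score(candidate: Candidate) -> float:
    """Combine the per-criterion scores using the weights above."""
    return sum(WEIGHTS[name] * candidate.scores[name] for name in WEIGHTS)


def rank(candidates, years: int = 3):
    """Highest weighted score first; ties go to the lower total cost."""
    return sorted(
        candidates,
        key=lambda c: (-weighted_score(c), total_cost_of_ownership(c, years)),
    )
```

A sheet like this does not replace a pilot, but it forces the team to state its priorities before vendor demos start.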

DevOps lifecycle phases with tools

The DevOps lifecycle typically includes planning and development, continuous integration and testing, and deployment and monitoring.

1. Continuous Development and Continuous Integration (CI/CD)

Continuous integration (CI) is a method of automating the integration of code changes from various contributors into a single software project. Continuous delivery (CD) keeps that software in a state where it can be released whenever required. CI/CD tools help create pipelines that standardize releases.
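At its core, a pipeline is an ordered list of stages in which a failure stops everything after it. The following sketch shows that idea in plain Python; it is not how any particular CI/CD tool is implemented, and the stage names in the usage note are hypothetical.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a callable that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first one that fails.

    Returns (succeeded, names_of_completed_stages).
    """
    completed = []
    for name, step in stages:
        if not step():
            return False, completed
        completed.append(name)
    return True, completed
```

For example, `run_pipeline([("build", build), ("test", test), ("deploy", deploy)])` would never reach `deploy` if `test` returned `False`, which is the guarantee a real pipeline gives you before a release.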

Here are three leading continuous integration/continuous delivery tools, followed by an AI coding assistant that complements them:

1. Jenkins

Jenkins is an open-source continuous integration and delivery platform used to automate the end-to-end release management lifecycle. By integrating with a wide range of DevOps testing and deployment tools, Jenkins enables developers to deliver software continuously.

Jenkins is one of the essential DevOps tools because of its features:

- It is a platform-agnostic, self-contained Java-based program that’s easy to install and run, with packages for Windows, macOS, and Unix-like operating systems.

- It is an open-source DevOps automation tool backed by strong community support.

- It offers multiple integrations with DevOps pipeline tools for seamless workflow automation.

2. AWS CodePipeline

Yes, we know AWS is not a popular name in the CI/CD tools list, but we have still included it because of its robust applications and use cases. AWS CodePipeline is a continuous delivery service that lets you model, visualize, and automate the steps required for a software release.

Features that make AWS CodePipeline a good CI/CD tool:

- The tool offers highly customized workflow modeling, making it a strong DevOps automation tool.

- You can integrate your own custom plugins into the system, improving DevOps orchestration.

- AWS CodePipeline allows you to create notifications for events impacting your DevOps pipeline and receive alerts in case of a failure or issue.

3. CircleCI

CircleCI is a continuous integration and delivery platform that enables development teams to automate the build, test, and deployment processes, helping them release code more quickly. The tool can be set up to efficiently run extremely complex DevOps pipelines using caching, Docker layer caching, resource classes, and many other features.

Some of its key features are:

- Automated testing tools

- The tool uses parallelism, which reduces overall build time in DevOps CI/CD pipelines.

- Offers role-based access control (RBAC) to users, including admins, non-admins, and other team members, improving DevOps security.
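To see why parallelism shortens builds, consider how a test suite might be split across several workers. The sketch below uses a simple greedy strategy (longest tests first, each assigned to the least-loaded worker). It is an illustration only, not CircleCI’s own splitting logic, and the test names and timings in the usage note are made up.

```python
import heapq


def split_tests(durations: dict, nodes: int) -> list:
    """Assign tests to `nodes` workers, longest first, least-loaded worker next.

    `durations` maps a test name to its expected run time in seconds.
    Returns one list of test names per worker.
    """
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    heap = [(0.0, index) for index in range(nodes)]  # (current load, worker index)
    heapq.heapify(heap)
    buckets = [[] for _ in range(nodes)]
    # Sort by duration descending, then by name so the result is deterministic.
    for name, seconds in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, index = heapq.heappop(heap)
        buckets[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return buckets
```

With `{"a": 60, "b": 30, "c": 30, "d": 20}` and two workers, the split is `[["a", "d"], ["b", "c"]]`: the slowest worker finishes after 80 seconds instead of the 140 seconds a serial run would take.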

4. Pieces

Pieces is an all-in-one AI-powered coding assistant that supercharges developer productivity by reducing context switching and more. It lets you save, search, reuse, and enrich code snippets across your toolchain, with a centralized copilot that learns from your work. Pieces supports both cloud-based LLMs like GPT-4 and Gemini, and on-device models like Llama 2 – giving you speed, control, and privacy.

What really sets Pieces apart is its Long-Term Memory (LTM-2). It remembers what you worked on, where, and with whom – across apps, docs, and chats – so you can ask things like, “What was I doing before my meeting?” or “Summarize that doc I read 3 months ago.”

Its offline-first architecture ensures data privacy, and you control exactly what’s captured over 9 months. With IDE/browser plugins, multimodal input (yes, even screenshots), Git integration, and automated documentation, Pieces isn’t just an AI tool – it’s your personalized developer memory. And it’s completely free for individuals.

2. Continuous testing

The testing phase of the DevOps lifecycle follows, in which the developed code is tested for bugs and errors that may have made their way into the code. This is where quality assurance (QA) comes into play to ensure that the developed software is usable. The QA process must be completed successfully in order to determine whether the software meets the client’s specifications.

Continuous testing is accomplished through automation tools such as JUnit, Selenium, and TestNG, which allow the QA team to test multiple code bases simultaneously. This helps catch functional flaws before the software is released.
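As a small illustration of the kind of check a pipeline runs on every commit, here is a unit test written with Python’s built-in `unittest` module, which follows the same xUnit style as JUnit. The `apply_discount` function is a hypothetical piece of application code invented for this example.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)
```

Saved in a test file, this can be run with `python -m unittest`, and a CI server can fail the build whenever that command exits with a non-zero status.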

DevOps Automated Testing Tools

Automated testing is a software testing technique that executes a test case suite using specialized automated testing software tools. It double-checks software functionality to ensure it performs exactly as designed, making it an essential part of DevOps testing tools.

Here are the three best automated testing tools:

1. LambdaTest

LambdaTest is a cloud-based automation testing & test orchestration platform that simplifies and enhances continuous testing for web applications. It offers a range of features that empower developers and testers to ensure optimal functionality and compatibility across various browsers and devices.

It supports a range of automated frameworks like Selenium, Cypress, Playwright, and Appium, among others.

Some of its key features are:

- Cross-browser and cross-platform testing

- Parallel testing

- LambdaTest offers seamless collaboration features, allowing team members to share test results, collaborate on bug fixes, and streamline debugging.

2. Snyk

Snyk is a developer security platform that integrates directly into development tools, workflows, and automation pipelines to find vulnerabilities in an application’s source code and open-source dependencies.

Some of its key features are:

- It provides real-time analytics of issues/bugs in your system.

- Multi-language scanning capabilities.

- Deployment management features allow you to validate changes before they are pushed to production environments.

3. Mend (formerly WhiteSource)

Mend (formerly WhiteSource) easily secures what developers create. The tool frees development teams from the burden of application security, enabling them to produce high-quality, secure code more quickly.

Some of its key features are:

- The automated remediation feature creates pull requests so that developers can update their custom code to fix security problems.

- Supports 27 programming languages and frameworks.

- Robust integration capabilities.

3. Continuous monitoring

Continuous monitoring is the operations phase in which the goal is to improve the overall efficiency of the software application while tracking the app’s performance. As a result, it is one of the most important stages of the DevOps lifecycle.

During the continuous monitoring phase, various system errors such as ‘server not reachable,’ ‘low memory,’ and so on are resolved. It also ensures the services’ availability and security. When network issues and other problems are detected, they are automatically resolved during this phase.

The operations team monitors user activities for inappropriate behavior using tools such as Middleware, Datadog, and Dynatrace. As a result, developers can proactively check the system’s overall health during continuous monitoring.

Infrastructure and server performance monitoring tools

Infrastructure monitoring is the process of collecting and analyzing data about a system or application. Server monitoring is the process of gaining visibility into the activities on the servers, both physical and virtual.

Here are the three best infrastructure monitoring and server performance monitoring tools:

1. Middleware

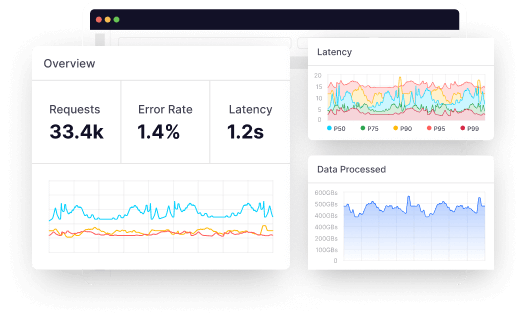

Middleware is a cloud-native observability platform that also supports Infrastructure monitoring. The tool provides the DevOps team with real-time visibility into on-premises and cloud deployments. It also allows teams to view the overall health status of their infrastructure by monitoring applications, processes, servers, containers, events, databases, and more.

The tool also has container infrastructure monitoring capabilities that allow you to monitor your Kubernetes or Docker applications, making it a full-stack infrastructure monitoring tool.

Features that make Middleware one of the best DevOps tools:

- Deep Infrastructure Visibility: It gives real-time insight into infrastructure performance by accessing thousands of metrics for active management.

- Fast Troubleshooting: It helps identify and resolve problems faster by correlating metrics, traces, and logs with just one click, consequently accelerating the troubleshooting process.

- Custom Dashboards: It lets users customize dashboards to better meet their business requirements, improving monitoring efficiency.

- Container Monitoring: It provides specialized capabilities for monitoring Kubernetes clusters and Docker containers, helping teams keep microservice performance balanced.

- Controllable Data Ingestion: Metrics can be toggled on and off based on tags, which helps reduce observability costs and cut out noisy metrics.

- Real-Time Alerts: Alerts set on performance metrics trigger instant notifications, shortening the time it takes to detect issues and allowing for quick mitigation.

Together, these features empower DevOps teams to ensure systems are performing optimally and remain reliable across a spectrum of environments.
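To make the idea of alerting on performance metrics concrete, here is a minimal threshold rule in Python. It is a generic sketch, not Middleware’s alerting engine: the rule fires only when every sample in a sliding window exceeds the threshold, which is one common way to avoid alerting on a single spike. The class name and return values are assumptions made for illustration.

```python
from collections import deque
from typing import Optional


class ThresholdAlert:
    """Fire when the last `window` samples are all above `threshold`."""

    def __init__(self, threshold: float, window: int):
        self.threshold = threshold
        self.samples = deque(maxlen=window)
        self.firing = False

    def observe(self, value: float) -> Optional[str]:
        """Record a sample; return "firing" or "resolved" on a state change."""
        self.samples.append(value)
        breached = (
            len(self.samples) == self.samples.maxlen
            and all(sample > self.threshold for sample in self.samples)
        )
        if breached and not self.firing:
            self.firing = True
            return "firing"
        if not breached and self.firing:
            self.firing = False
            return "resolved"
        return None
```

With `ThresholdAlert(threshold=90, window=3)` watching CPU usage, two high samples return `None`, the third returns `"firing"`, and the first sample back under 90 returns `"resolved"`.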

2. Datadog

Datadog is an observability platform for cloud-scale applications that provides server monitoring, database monitoring, IT monitoring, and various other services through its SaaS-based platform. It supports Windows, Linux, and macOS.

Some of its key features are:

- The tool provides a Host map that allows you to visualize all your hosts on one screen.

- Provides real-time monitoring.

- A decade-old platform that offers robust integration with almost all DevOps tools.

3. Dynatrace

Dynatrace is a monitoring platform that simplifies enterprise cloud complexity with observability, AIOps, and application security—all in one platform. This Infrastructure monitoring tool is fast and reliable and used by a wide range of industries.

Some of its key features are:

- The tool integrates with all major cloud providers and supports almost all major technologies.

- Uses AI to predict and resolve issues before they impact the users.

- It offers server-side service monitoring, as well as network, process, and host monitoring.

Incident Alert Management Solution

The incident alert management solution is a platform that aggregates high-priority alerts from tech stacks across cybersecurity, ticketing, monitoring and observability to deliver IT incidents to the right on-call engineer, no matter where they are.

Here are the three best incident alert management tools:

1. OnPage

OnPage is an incident alerting tool that mobilizes code owners when critical code fails by delivering incident notifications as persistent, distinguishable alerts, helping them improve incident response times and accelerate software delivery.

Its alerts can bypass the silent switch on all smartphones, and for extra redundancy, alerts can also be sent by SMS, email, and/or phone call.

Some of its key features are:

- Bi-directional integration with popular monitoring and ticketing tools.

- The ability to send high-priority alerts indicating urgent issues, and low-priority alerts for regular communication.

- On-call management with the ability to escalate alerts when the first on-call developer is unavailable.

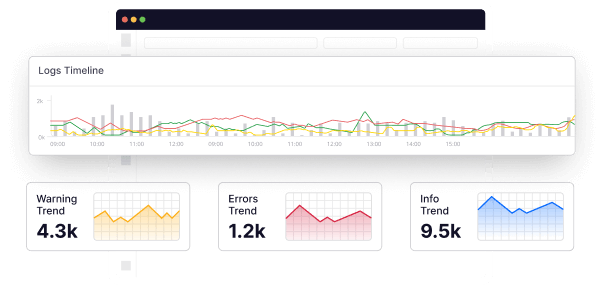

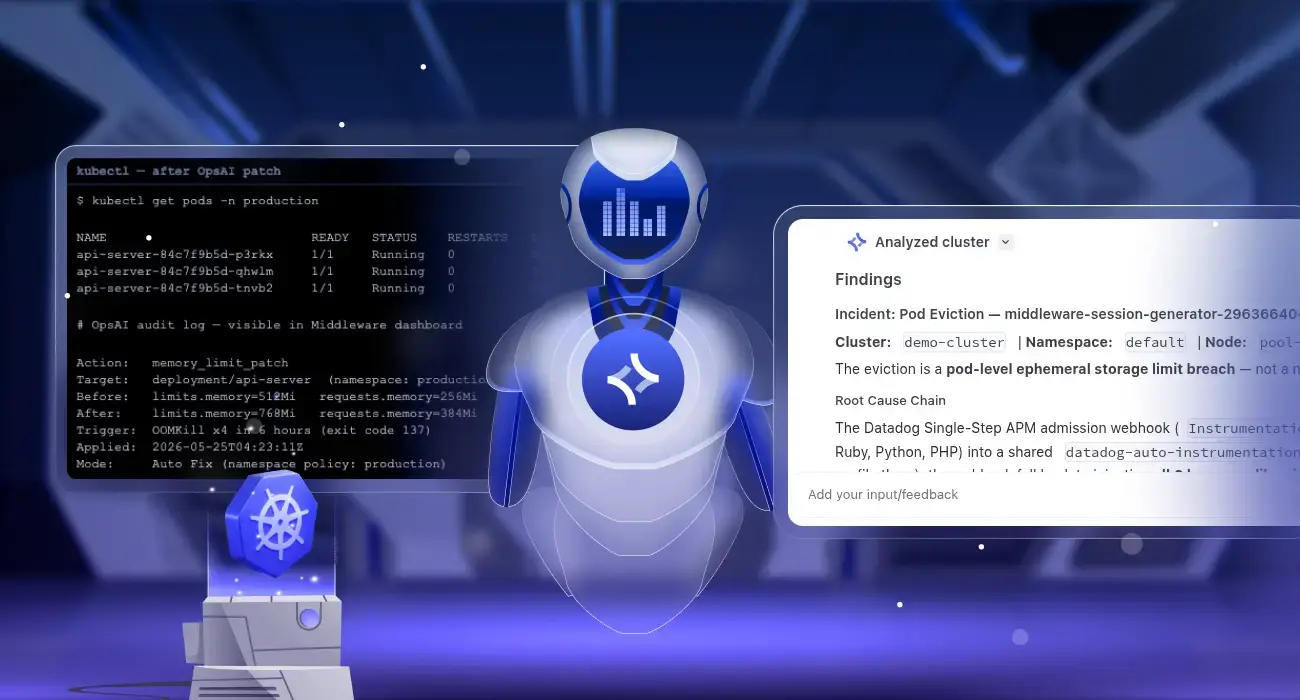
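The escalation behavior described in the feature list can be sketched in a few lines. This is a generic illustration rather than OnPage’s API: `notify` stands in for whatever function pages a person and reports whether they acknowledged in time, and the responder names in the usage note are hypothetical.

```python
from typing import Callable, List, Optional


def page_with_escalation(
    chain: List[str], notify: Callable[[str], bool]
) -> Optional[str]:
    """Page each responder in order until one acknowledges the alert.

    `notify(responder)` is assumed to return True if that person
    acknowledged within the allowed time. Returns the responder who
    acknowledged, or None if nobody in the chain did.
    """
    for responder in chain:
        if notify(responder):
            return responder
    return None
```

Calling `page_with_escalation(["primary", "secondary", "manager"], notify)` pages the secondary engineer only if the primary does not acknowledge, and the manager only if both stay silent.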

2. Middleware

Middleware, as we mentioned above, is a full-stack observability platform, and its key features include alerting and incident management. The tool offers an AI-powered alert system that helps developers identify and respond to critical issues the moment they occur, improving MTTD and MTTR.

Middleware provides a complete Incident Alert Management Solution that improves the efficiency and responsiveness of IT operations. Some important features include:

- Root Cause Analysis and Correlation: Middleware automatically correlates related alerts and data, allowing teams to identify and fix the root causes of incidents more easily.

- Multi-Channel Notifications and Alert Assignment: Monitored alerts are sent to the right teams and channels to guarantee swift visibility and resolution.

- Integration Capabilities: With integration to 200+ tools like Slack and PagerDuty, Middleware raises alerts to preferred communication channels to keep teams informed in real-time.

- Alert Customization: To counter alert fatigue, Middleware gives users the flexibility to adjust alerting parameters so notifications go off only when there truly is an issue, minimizing nuisance noise.

- Performance Monitoring: The platform monitors infrastructure performance continuously, looking for signs of problems such as spikes in CPU, memory leaks, and disk bottlenecks so as to facilitate proactive issue resolution.

By adopting Middleware’s Incident Alert Management Solution, organizations can minimize downtime, enhance operational efficiency, and ensure prompt and effective resolution of critical issues.
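MTTD (mean time to detect) and MTTR (mean time to resolve) are simple averages over incident timestamps. The sketch below shows the arithmetic; note that teams define MTTR differently, and this version assumes it is measured from the start of the incident to its resolution. The `Incident` fields are hypothetical names for whatever your incident tracker records.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Incident:
    started: datetime    # when the problem actually began
    detected: datetime   # when an alert fired or someone noticed
    resolved: datetime   # when service was restored


def mean_time_to_detect(incidents: List[Incident]) -> timedelta:
    """Average gap between start and detection (needs at least one incident)."""
    total = sum((i.detected - i.started for i in incidents), timedelta())
    return total / len(incidents)


def mean_time_to_resolve(incidents: List[Incident]) -> timedelta:
    """Average gap between start and resolution (needs at least one incident)."""
    total = sum((i.resolved - i.started for i in incidents), timedelta())
    return total / len(incidents)
```

For two incidents detected after 4 and 2 minutes and resolved after 30 and 50 minutes, MTTD is 3 minutes and MTTR is 40 minutes.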

3. Better Uptime

Better Uptime is an incident management tool by Better Stack that allows businesses to monitor websites, manage incidents, and share system status with customers via status pages.

It can be used to log incidents on an audit timeline and coordinate with team members to resolve downtime or incidents quickly.

It can send alerts about critical events across teams by phone call, SMS, email, Microsoft Teams, Slack, and push notification.

Some of its key features include:

- It has a simple and straightforward interface but offers a wide range of functionality.

- The on-call schedule calendar lets you configure on-call duty rotations so that alerts reach the right people at the right time.

- It allows easy integration with third-party systems like New Relic, Grafana, and others.

4. Continuous deployment

Continuous deployment (CD) ensures easy product deployment without compromising application performance. During this phase, ensuring that the code is precisely deployed on all available servers is critical. This process eliminates the need for scheduled releases while also speeding up the feedback mechanism, allowing developers to address issues more quickly and accurately.

Through configuration management, containerization tools aid in continuous deployment. A container platform is software that creates, manages, and secures containerized applications. Container management tools enable easier and faster networking and container orchestration.

Container management tools

Container management platforms handle a number of containerized application processes, such as governance, automation, extensibility, security, and support. Here are the three top container management tools:

1. Docker

Docker is an open platform that uses OS-level virtualization for developing, shipping, and running applications in packages called containers. A Docker image is a standalone, executable package that contains all the components required to run a program.

Some of the key features that make it a good DevOps tool:

- Developers collaborate, test, and build using Docker.

- Distributed applications can be packaged, run, and managed using Docker Apps.

- Docker Hub offers millions of images that come from trusted publishers and the community.

- Standardized packaging format that runs on a variety of Linux and Windows Server operating systems.

2. Kubernetes

Kubernetes (also called k8s or “kube”) is an open-source container orchestration platform that automates many of the manual processes in setting up, running, and scaling containerized systems. K8s is one of the most popular container management tools in the DevOps community.

Features that set it apart from other DevOps Tools are:

- The tool offers automated rollouts & rollbacks.

- It automatically adjusts the resource utilization of containerized apps.

- K8s uses a declarative model that allows it to maintain the defined state and recover from any failures.
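The declarative model in the last bullet can be pictured as a reconciliation loop: compare what you asked for with what is running, then act on the difference. The sketch below is a toy model in plain Python, not Kubernetes code; the `start`/`stop` action names and the replica-count dictionaries are assumptions made for illustration.

```python
def reconcile(desired: dict, observed: dict) -> list:
    """Return the actions needed to move `observed` toward `desired`.

    Both arguments map an app name to a replica count.
    """
    actions = []
    for app, want in sorted(desired.items()):
        have = observed.get(app, 0)
        if have < want:
            actions.append(("start", app, want - have))
        elif have > want:
            actions.append(("stop", app, have - want))
    # Anything running that is no longer declared gets removed.
    for app in sorted(set(observed) - set(desired)):
        actions.append(("stop", app, observed[app]))
    return actions


def apply(observed: dict, actions: list) -> dict:
    """Simulate carrying out the actions and return the new observed state."""
    state = dict(observed)
    for verb, app, count in actions:
        state[app] = state.get(app, 0) + (count if verb == "start" else -count)
        if state[app] == 0:
            del state[app]
    return state
```

Running `reconcile` again after `apply` returns an empty list, which is the point of the model: once the observed state matches the declared one, there is nothing left to do until something drifts or fails.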

3. OpenShift

OpenShift (by Red Hat) is an open-source, cloud-based Kubernetes management platform that helps developers build applications at scale. It provides automatic installation, upgrades, and life cycle management for the operating system, Kubernetes and cluster services, and applications throughout the container stack.

Some of its key features are:

- Apps running on OpenShift can scale to hundreds or thousands of instances across thousands of nodes in seconds.

- The tool follows the open-source standards set by the Open Container Initiative (OCI) and Cloud Native Computing Foundation (CNCF).

- It allows automated installation and upgrades across all popular cloud and on-premises stacks.

Infrastructure as code tools

Infrastructure as code, or IaC, is an IT methodology that controls and codifies the underlying software-based IT infrastructure. Instead of manually configuring individual hardware devices and OSs, IaC enables development or operations teams to automatically manage, monitor, and provision resources.

Infrastructure as code tools help configure and automate the provisioning of the underlying infrastructure. Here are the three best Infrastructure as code tools:

1. Terraform

Terraform is an infrastructure as code tool that helps developers build, change, and improve their infrastructure without manually provisioning or managing it. With Terraform, you can manage AWS, Azure, Google Cloud, Kubernetes, OpenStack, and other popular infrastructure providers.

Features that set Terraform apart from other DevOps Tools are:

- Terraform lets you create a blueprint of your infrastructure in the form of a template that can be versioned, shared, and re-used.

- You can implement complex changes to your infrastructure with almost no human interaction.

- Source-available (released under the Business Source License since 2023, open source before that), highly scalable, and fairly priced tool that offers almost all paid features to small teams for free.
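The blueprint idea boils down to comparing a declared set of resources with what already exists and deriving a change plan before anything is touched. The sketch below shows that comparison in plain Python. It is a conceptual model only, not Terraform’s implementation or configuration language, and the resource names in the usage note are hypothetical.

```python
def plan(desired: dict, current: dict) -> dict:
    """Compare declared resources with existing ones and list the changes.

    Both arguments map a resource name to its settings (any comparable value).
    """
    return {
        "create": sorted(desired.keys() - current.keys()),
        "update": sorted(
            name
            for name in desired.keys() & current.keys()
            if desired[name] != current[name]
        ),
        "delete": sorted(current.keys() - desired.keys()),
    }
```

If the blueprint declares `vm-web` and `vm-db` while only `vm-web` (with different settings) and `vm-cache` exist, the plan is to create `vm-db`, update `vm-web`, and delete `vm-cache`. Reviewing such a plan before applying it is what keeps large infrastructure changes safe.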

2. Puppet

Puppet is a system management tool that centralizes and automates configuration management. This open-source configuration management software is commonly used for server configuration, management, deployment, and orchestration throughout the whole infrastructure for a variety of applications and services.

Some of its key features are:

- Puppet offers idempotency, which means one can safely run the same set of configurations multiple times on the same machine and get the same results.

- Puppet can be integrated with Git (a version control tool) to store Puppet code, helping your team gain the benefits of both DevOps and agile methodology.

- The Resource Abstraction Layer (RAL) lets you describe the desired system configuration without worrying about implementation details or how the underlying commands work on each platform.
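Idempotency, mentioned in the first bullet, is easiest to see in code. The sketch below is plain Python rather than Puppet code: it ensures a configuration line is present and reports whether it had to change anything. The function name and the example setting are assumptions chosen for illustration.

```python
def ensure_line(lines: list, line: str) -> tuple:
    """Make sure `line` is present in a config file's lines.

    Returns (new_lines, changed). Running it again on its own output
    changes nothing, which is what makes the operation idempotent.
    """
    if line in lines:
        return list(lines), False
    return list(lines) + [line], True
```

The first call on a file missing `PasswordAuthentication no` adds the line and reports `changed=True`; every later call returns the same content with `changed=False`, so the step is safe to run on every machine on every schedule.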

3. Progress Chef

Progress Chef (formerly Chef) is a robust Infrastructure management automation tool that ensures that configurations are applied consistently in every environment. This tool allows DevOps teams to define and implement safe and scalable infrastructure automation across any cloud, virtual machine, and/or physical infrastructure.

Some of its key features are:

- The tool can run in a client/server mode or in a standalone configuration mode named “chef-solo.”

- The self-service tool allows agile delivery teams to provision and deploy infrastructure on-demand without any dependencies.

- Wide range of third-party integrations.

5. Continuous feedback

Continuous feedback is required to determine and analyze the application’s outcome. It sets the direction for improving the current version and releasing a new version based on feedback from stakeholders.

Only by analyzing the results of software operations can the overall process of app development be improved. Feedback is simply information obtained from the client’s end. Information is important in this context because it contains all of the data about the software’s performance and related issues. It also includes suggestions made by software users.

6. Continuous operations

The final stage of the DevOps lifecycle is the shortest and most straightforward. Continuity is at the heart of all DevOps operations, allowing developers to automate release processes, detect issues quickly, and build better versions of software products. Continuity is essential for removing distractions and other extra steps that impede development.

Continuous operations shorten development cycles, allowing organizations to promote their products continually and reduce overall time to market. DevOps increases the value of software products by making them better and more efficient, which attracts new customers.

Conclusion

We hope this DevOps tools list will help you accelerate your DevOps journey. We at Middleware firmly believe that the open toolchain approach is the best approach because it allows the primary DevOps tool to be customized with third-party tools according to the organization’s unique needs.

That’s why our cloud-native observability platform has 50+ integrations that allow you to create custom workflows and unified dashboards as per your business-specific requirements.

Sign up to see this cloud-native DevOps tool in action!

FAQs

What are DevOps tools?

DevOps tools are a set of tools that help organizations effectively adopt the DevOps methodology across the multiple phases of the software development lifecycle.

Which are the best DevOps tools?

Here is a list of the best DevOps tools:

- Middleware

- Datadog

- Jenkins

- Snyk

- Xray

- Terraform

- Puppet

- Docker

- K8s

How to choose the best DevOps tools?

You should consider the following factors while choosing the best DevOps tools:

- Price

- Support for CI/CD

- Integration with other tools

- API support

- Cross-platform support

- Customization capabilities

- Monitoring and analytics features

- Cloud-native

- Customer support

Why do we need DevOps tools?

DevOps tools are essential for streamlining and automating the processes involved in software development, testing, deployment, and operations. They help with automation, testing, monitoring, releasing fast deployments, etc.

![15+ Best DevOps Tools To Look For in 2026 [Updated]](https://middleware.io/wp-content/uploads/2025/04/Best-DevOps-Tools.jpg)